Overview

This was the project where things clicked. It was the third block of my first year, and for the first time I wasn’t just reading about model training; I was doing it. Look-Out-Traffic is a traffic sign recognition system that classifies priority road signs with a CNN built on EfficientNetB0 through transfer learning. It reached 100% test accuracy across five sign classes, above the 92.5% human-level accuracy measured in a small participant survey.

More than the numbers, this project introduced me to computer vision — a field that became one of my main interests and continues to shape the direction of my work today.

The Problem

Autonomous vehicles depend on Traffic Sign Recognition (TSR) to make safe, real-time decisions. But current systems — even from companies like Tesla, Mobileye, and Volvo — still struggle in challenging conditions: poor lighting, adverse weather, damaged or obstructed signs.

Add distracted driving to the mix — responsible for over 3,000 fatalities in Europe in 2019 — and the need becomes clear: vehicles need to recognize signs reliably even when the driver isn’t paying attention.

Look-Out-Traffic focuses on priority signs specifically, because they carry the most immediate consequences: stop, yield, or go.

Approach

Building the dataset from scratch — I manually collected 90% of the 702 images using Mapillary and Google Street View, screenshot by screenshot. The remaining 10% came from public datasets on RoboFlow and Kaggle. Every image was cleaned, standardized to JPG, cropped to isolate the sign, and resized to 128×128px. Five classes: Full Stop, Give Way, Give Way to Oncoming Traffic, Priority Road, and Priority Over Oncoming Traffic.
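The preprocessing steps described above (crop to the sign, convert to JPG, resize to 128×128) could be sketched roughly as follows with Pillow. The function name, the `crop_box` argument, and any file paths are hypothetical, and the original pipeline may well have used different tooling.

```python
from pathlib import Path

TARGET_SIZE = (128, 128)  # final resolution stated in the write-up


def preprocess_sign(src, dst, crop_box=None):
    """Crop to the sign, convert to RGB, resize, and save as JPEG.

    crop_box is a hypothetical (left, upper, right, lower) pixel box;
    how the crops were actually chosen is not described in the source.
    """
    # Imported lazily so this module loads even without Pillow installed.
    from PIL import Image

    with Image.open(src) as img:
        if crop_box is not None:
            img = img.crop(crop_box)
        # JPEG has no alpha channel, so screenshots saved as RGBA are
        # normalised to RGB before saving.
        img = img.convert("RGB")
        img = img.resize(TARGET_SIZE)
        dst = Path(dst).with_suffix(".jpg")
        dst.parent.mkdir(parents=True, exist_ok=True)
        img.save(dst, format="JPEG")
    return dst
```

Resizing every crop to the same square shape distorts non-square crops slightly, which is usually acceptable when the sign already fills most of the frame.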

It was tedious work, but it taught me something important — the quality of your data matters more than the complexity of your model.

Setting baselines — Before building anything complex, I established benchmarks: a random guess baseline of 20% and a basic MLP that reached 69%. These gave me a clear picture of what “better” actually looked like.
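The 20% figure follows directly from having five classes: uniform random guessing is right one time in five. Here is a minimal sketch of that calculation, plus a majority-class baseline as an extra sanity check. The majority baseline and the example labels are illustrative assumptions, not results from the project.

```python
from collections import Counter

CLASSES = [
    "Full Stop",
    "Give Way",
    "Give Way to Oncoming Traffic",
    "Priority Road",
    "Priority Over Oncoming Traffic",
]


def random_guess_accuracy(num_classes):
    """Expected accuracy of uniform random guessing."""
    return 1.0 / num_classes


def majority_class_accuracy(labels):
    """Accuracy obtained by always predicting the most frequent label."""
    counts = Counter(labels)
    return counts.most_common(1)[0][1] / len(labels)


print(f"Random guess: {random_guess_accuracy(len(CLASSES)):.0%}")  # Random guess: 20%

# Hypothetical label list, only to show how the function is called.
example_labels = ["Full Stop"] * 3 + ["Give Way"] * 2 + ["Priority Road"]
print(f"Majority class: {majority_class_accuracy(example_labels):.0%}")  # Majority class: 50%
```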

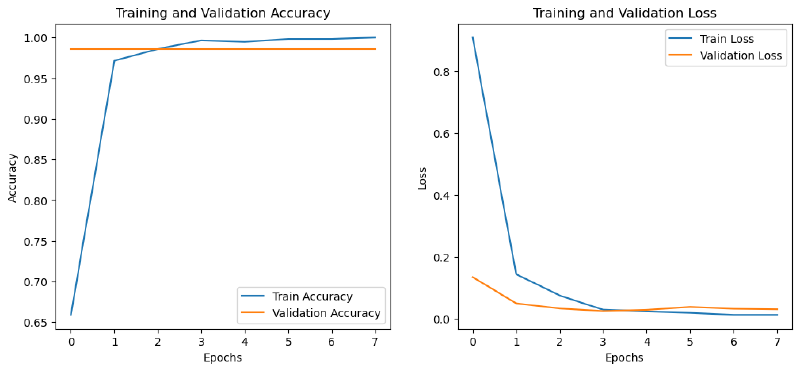

Transfer learning with EfficientNetB0 — This is where the real learning happened. I used EfficientNetB0 pre-trained on ImageNet as a feature extractor — my first hands-on experience with transfer learning. On top of it, I built custom dense layers (256 → 128 neurons), added dropout at 0.5 to control overfitting, and trained with Adam at a learning rate of 1e-4 with early stopping.
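A setup along these lines could look like the Keras sketch below. The layer sizes, dropout rate, optimizer, and learning rate come from the description above. The dropout placement, the activations, the loss, the pooling choice, and the early-stopping patience are assumptions made for illustration.

```python
NUM_CLASSES = 5
INPUT_SHAPE = (128, 128, 3)


def build_model(weights="imagenet"):
    """EfficientNetB0 feature extractor with a small dense head."""
    # Imported lazily because TensorFlow is a heavy dependency.
    import tensorflow as tf

    base = tf.keras.applications.EfficientNetB0(
        include_top=False,
        weights=weights,
        input_shape=INPUT_SHAPE,
        pooling="avg",  # global average pooling -> one feature vector per image
    )
    base.trainable = False  # freeze the backbone: pure feature extraction

    inputs = tf.keras.Input(shape=INPUT_SHAPE)
    x = base(inputs, training=False)
    x = tf.keras.layers.Dense(256, activation="relu")(x)
    x = tf.keras.layers.Dropout(0.5)(x)  # placement is an assumption
    x = tf.keras.layers.Dense(128, activation="relu")(x)
    outputs = tf.keras.layers.Dense(NUM_CLASSES, activation="softmax")(x)

    model = tf.keras.Model(inputs, outputs)
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=1e-4),
        loss="sparse_categorical_crossentropy",  # assumes integer class labels
        metrics=["accuracy"],
    )
    return model


def early_stopping(patience=5):
    """Stop when validation loss stalls; the patience value is an assumption."""
    import tensorflow as tf

    return tf.keras.callbacks.EarlyStopping(
        monitor="val_loss", patience=patience, restore_best_weights=True
    )
```

Training would then be a call such as `build_model().fit(train_ds, validation_data=val_ds, epochs=50, callbacks=[early_stopping()])`, where `train_ds`, `val_ds`, and the epoch count are hypothetical. The Keras EfficientNet models include their own input rescaling, so they expect raw pixel values in the 0 to 255 range.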

Watching the model go from random guessing to near-perfect accuracy across epochs was the moment I understood what neural networks actually do — not just in theory, but in practice.

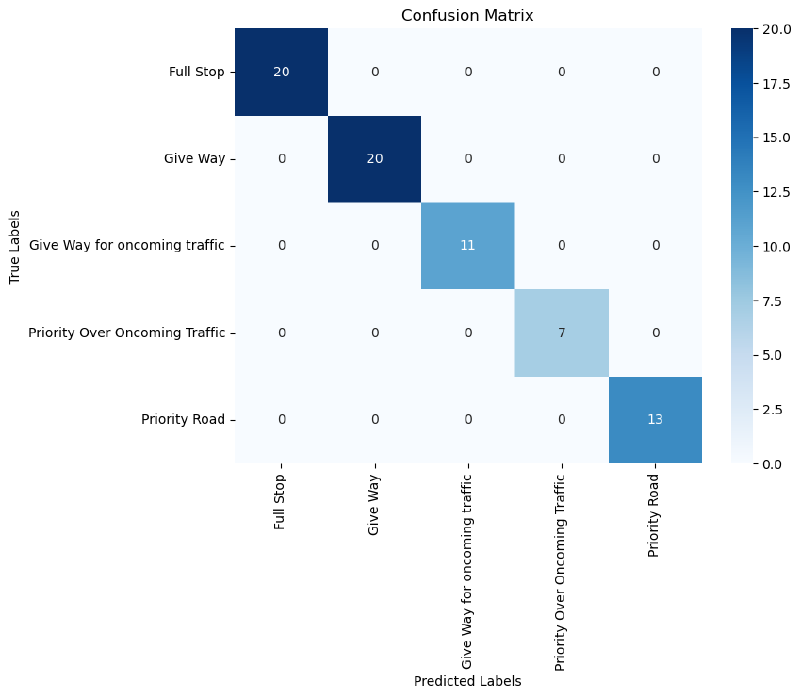

Measuring against humans: I ran a survey with 6 participants to benchmark human performance. The result: 92.5% accuracy overall. Stop signs were universally recognized (100%), but Priority Road signs tripped people up at 83.3%. With 100% test accuracy on every class, the model matched humans on Stop signs and outperformed them overall.
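Per-class human accuracy is simply the share of correct answers for that sign. With 6 participants, 83.3% is consistent with 5 correct answers out of 6, assuming each participant judged one image per class (the survey layout is not described here). A sketch with hypothetical response data:

```python
def accuracy(responses):
    """Fraction of correct answers, given a list of booleans."""
    return sum(responses) / len(responses)


# Hypothetical survey answers: one True/False per participant.
stop_answers = [True] * 6                     # everyone recognised the sign
priority_road_answers = [True] * 5 + [False]  # one participant got it wrong

print(f"Full Stop: {accuracy(stop_answers):.1%}")               # Full Stop: 100.0%
print(f"Priority Road: {accuracy(priority_road_answers):.1%}")  # Priority Road: 83.3%
```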

Key Results

| Metric | Score |
|---|---|
| Test Accuracy | 100% |
| Test Loss | 0.0235 |
| Precision (all classes) | 1.0 |
| Recall (all classes) | 1.0 |
| F1-Score (all classes) | 1.0 |
| Human-Level Accuracy | 92.5% |
| MLP Baseline | 69% |
| Random Guess | 20% |
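For reference, per-class precision, recall, and F1 can be derived from a confusion matrix. The sketch below uses plain Python and made-up 3-class matrices (not project data) to show the calculation. A perfect classifier has a purely diagonal matrix, which yields 1.0 for every metric.

```python
def per_class_metrics(confusion):
    """Return {class_index: (precision, recall, f1)}.

    confusion[i][j] = number of samples with true class i predicted as class j.
    """
    n = len(confusion)
    metrics = {}
    for k in range(n):
        tp = confusion[k][k]
        predicted_k = sum(confusion[i][k] for i in range(n))  # column sum
        actual_k = sum(confusion[k])                          # row sum
        precision = tp / predicted_k if predicted_k else 0.0
        recall = tp / actual_k if actual_k else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        metrics[k] = (precision, recall, f1)
    return metrics


# Illustrative matrices, not the project's actual predictions.
perfect = [[10, 0, 0], [0, 8, 0], [0, 0, 7]]
imperfect = [[8, 2, 0], [0, 8, 0], [0, 0, 7]]

print(per_class_metrics(perfect)[0])    # (1.0, 1.0, 1.0)
print(per_class_metrics(imperfect)[0])  # precision 1.0, recall 0.8
```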

Error Analysis

The final model achieved perfect test accuracy, but earlier iterations told a more interesting story:

Lighting and exposure — Shadows and glare confused the model, causing Stop signs to be misclassified as Give Way or Priority Road. A reminder that real-world conditions don’t look like clean training data.

Angle and orientation — Signs captured from the side instead of head-on were harder to classify. The model needed more diversity in viewing angles — something I’d later explore in depth during my sign language recognition research.

Class imbalance — Stop signs dominated the dataset. When uncertain, the model defaulted to the most common class. A classic bias pattern, and a lesson in why balanced datasets matter.
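One common way to counter this kind of bias, not necessarily the one used in this project, is to weight classes inversely to their frequency during training. Here is a sketch with hypothetical class counts, since the real per-class counts are not given in this write-up:

```python
from collections import Counter


def balanced_class_weights(labels):
    """Inverse-frequency weights: n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


# Hypothetical, deliberately imbalanced label list.
labels = ["stop"] * 60 + ["give_way"] * 20 + ["priority_road"] * 20
weights = balanced_class_weights(labels)
print(weights)  # the dominant class gets the smallest weight
```

In Keras, weights like these can be keyed by integer class index and passed to `model.fit` through its `class_weight` argument, so that mistakes on rare classes cost more than mistakes on the dominant one.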

Business Value

The project demonstrates that a lightweight CNN with transfer learning can reach 100% test accuracy on a small, carefully curated dataset (702 images, five classes) without expensive infrastructure. The system’s focus on priority signs addresses the most safety-critical category of road signage, and its architecture is designed to be adaptable across regional sign standards.

Reflections

Looking back, this project was a turning point. It was my first real encounter with convolutional neural networks, transfer learning, and the full pipeline from data collection to deployment-ready evaluation. Building the dataset manually was the most time-consuming part, but it gave me an intuition for data quality that no textbook could.

The jump from 69% (MLP) to 100% (EfficientNetB0) wasn’t just a metric improvement — it showed me what’s possible when you choose the right tool for the problem. And the error analysis from earlier iterations taught me that understanding why a model fails is just as valuable as celebrating when it succeeds.

This is where my interest in computer vision started. Everything that came after — plant phenotyping, sign language recognition, object detection — traces back to this project.